Acoustic Camera

It used to be a dream to be able to ‘see the sound’ but now you can do exactly that with this acoustic camera system from gfai tech in Berlin.

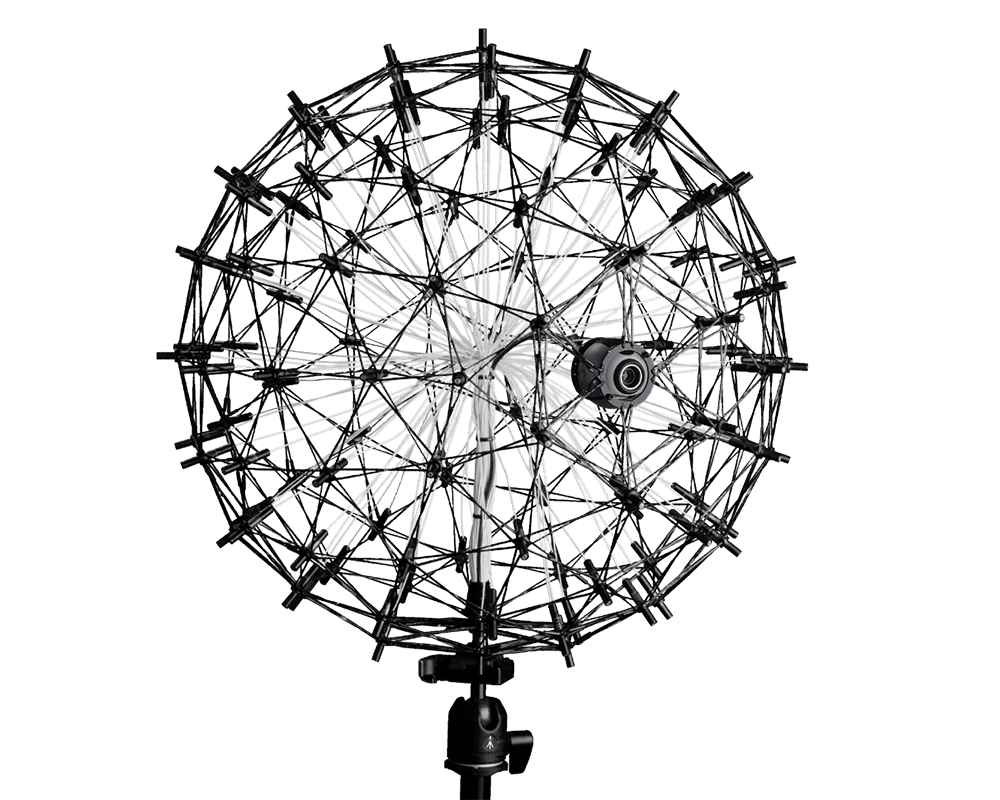

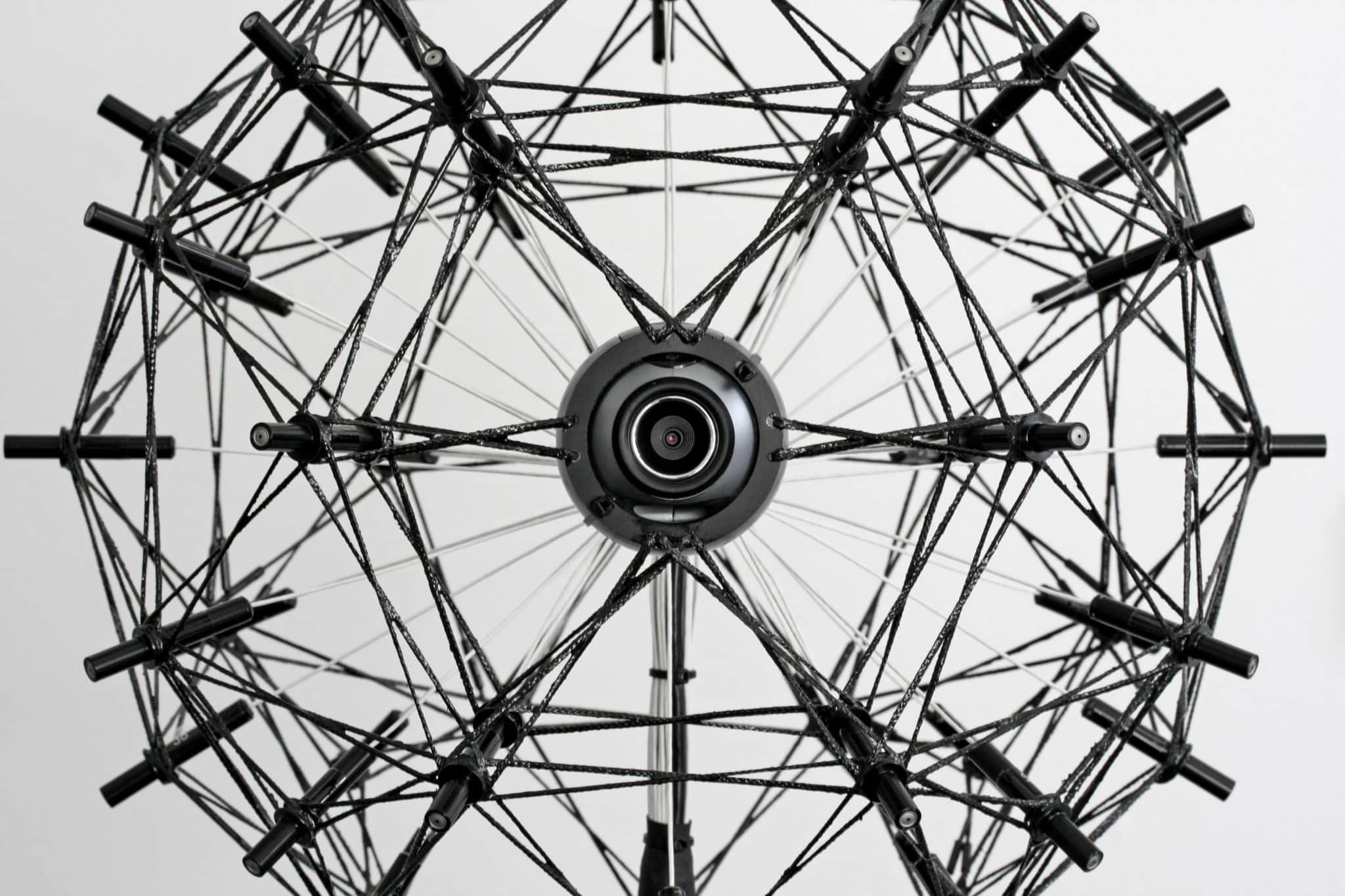

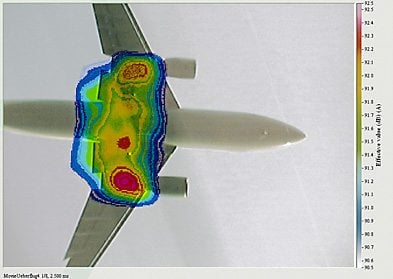

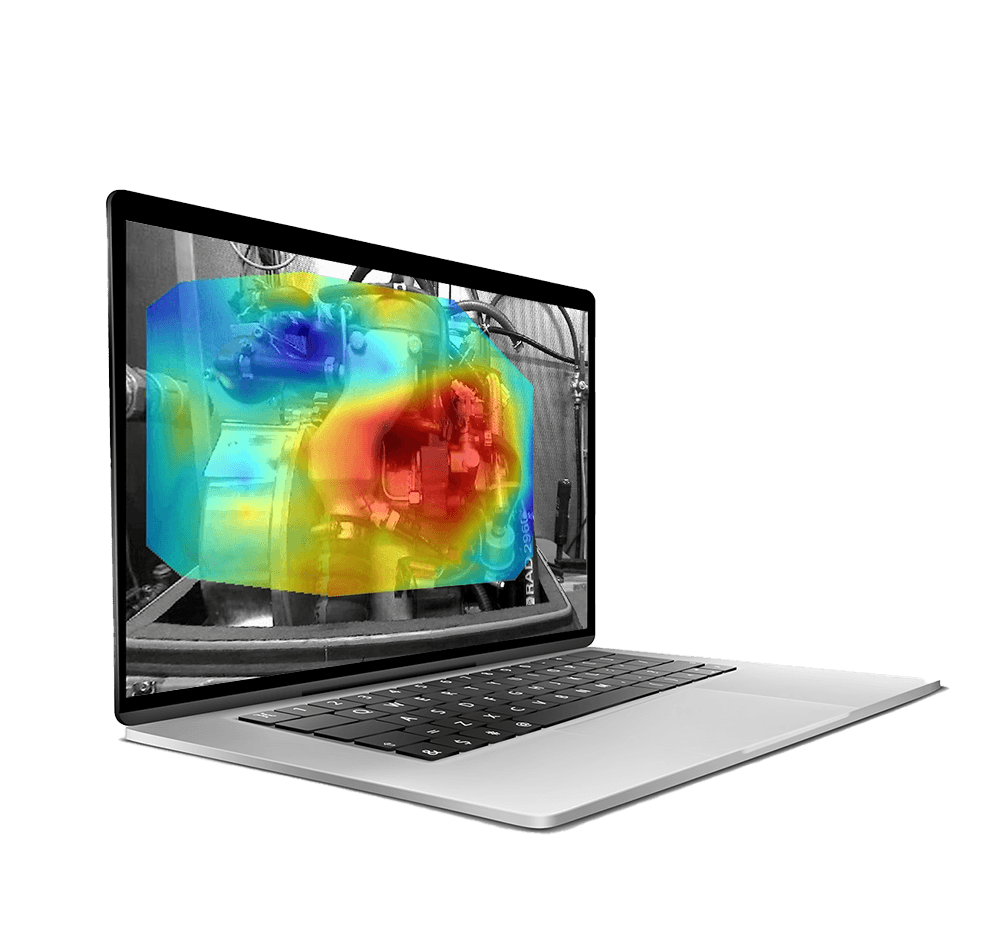

The system combines an array of microphones with a patented camera and uses a beamforming technique to locate the sources of sound. The result is an acoustic image superimposed on an optical image taken at the same time.

The Acoustic Camera from gfai was the first commercially viable system to use beamforming to localise acoustic emissions visually. Launched in 2001 as a pioneering technology, the Acoustic Camera has over the years become a byword for beamforming systems in general. The tool is now used in a variety of industries and has a growing customer base worldwide. Because the acquisition system is high-speed, images can be taken live, or longer ‘exposures’ can be recorded to reveal more detail and allow post-processing.

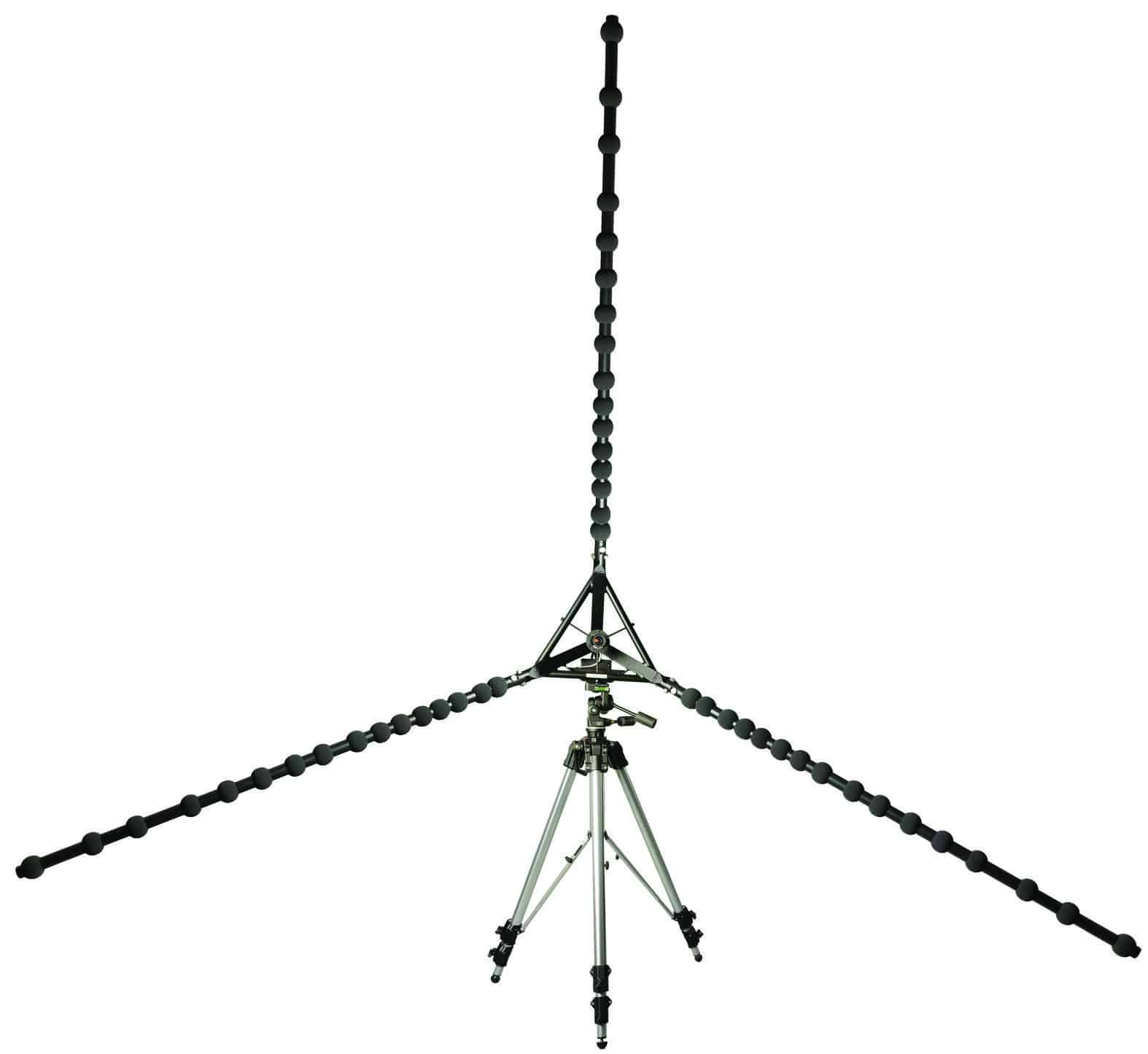

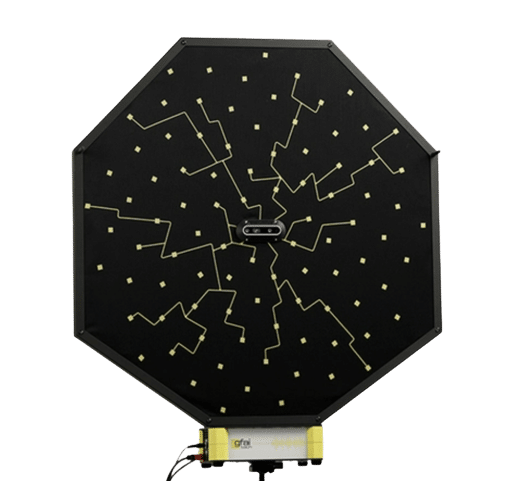

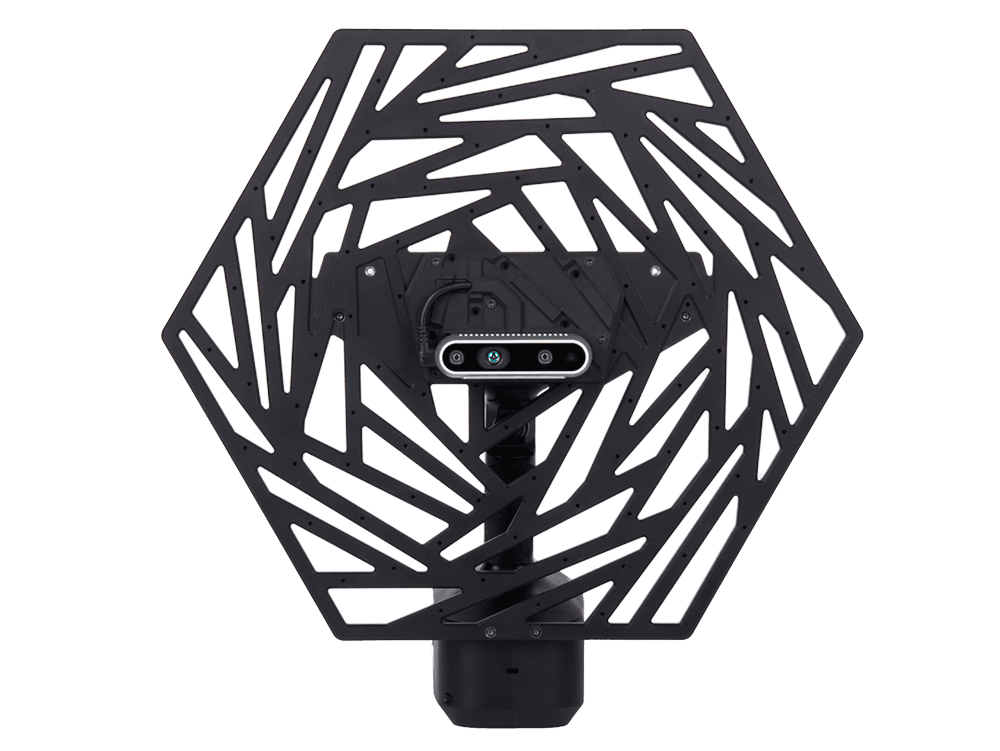

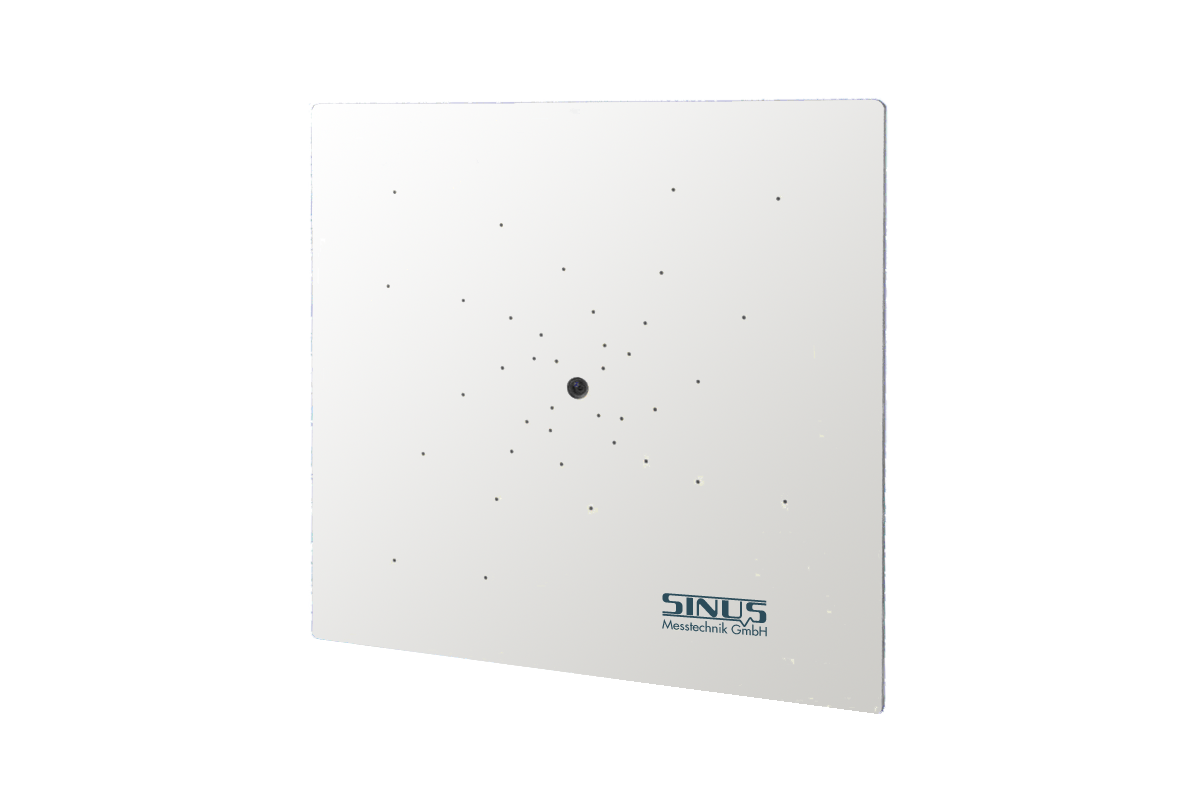

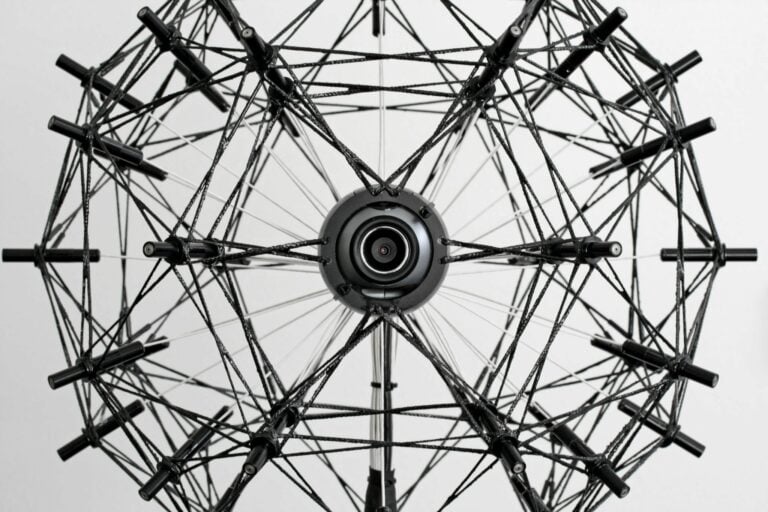

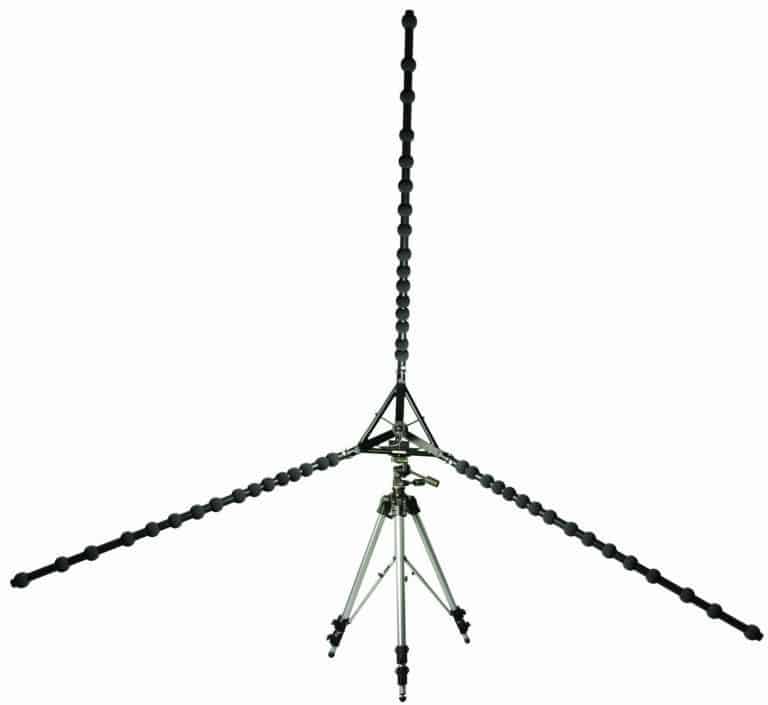

Several arrays are available, depending on the frequency range and the size of the source. For example, the Star array uses three long arms to space the microphones widely for large environmental sources such as wind turbines or factories. The general-purpose array is best for measurements on car engines or computing devices, and there’s even a small array for measurements on devices such as mobile phones.
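Why array size is tied to frequency range and source size can be estimated with a general rule of thumb (not a gfai specification): for a circular aperture of diameter D, the Rayleigh criterion gives a smallest resolvable source spacing of roughly Δx ≈ 1.22 λ z / D, where λ = c/f is the wavelength and z the measuring distance. The sketch below assumes c = 343 m/s; the function name and the example apertures are hypothetical, and real arrays deviate from this idealised estimate depending on their microphone layout.

```python
def rayleigh_resolution(freq_hz: float, distance_m: float, aperture_m: float,
                        speed_of_sound: float = 343.0) -> float:
    """Rough smallest resolvable spacing (m) between two sources.

    Rule of thumb from the Rayleigh criterion for a circular aperture:
    delta_x ~= 1.22 * wavelength * distance / aperture.
    """
    if freq_hz <= 0 or distance_m <= 0 or aperture_m <= 0:
        raise ValueError("frequency, distance and aperture must be positive")
    wavelength = speed_of_sound / freq_hz  # lambda = c / f
    return 1.22 * wavelength * distance_m / aperture_m


# Hypothetical apertures of 0.35 m and 1.2 m, both measuring from 3 m away
for aperture in (0.35, 1.2):
    for f in (500.0, 2000.0, 8000.0):
        res = rayleigh_resolution(f, 3.0, aperture)
        print(f"D = {aperture} m, f = {f:.0f} Hz: about {res:.2f} m")
```

Larger apertures and higher frequencies give finer resolution, which is consistent with using widely spaced arms for large, low-frequency sources and compact arrays for small devices measured at close range.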

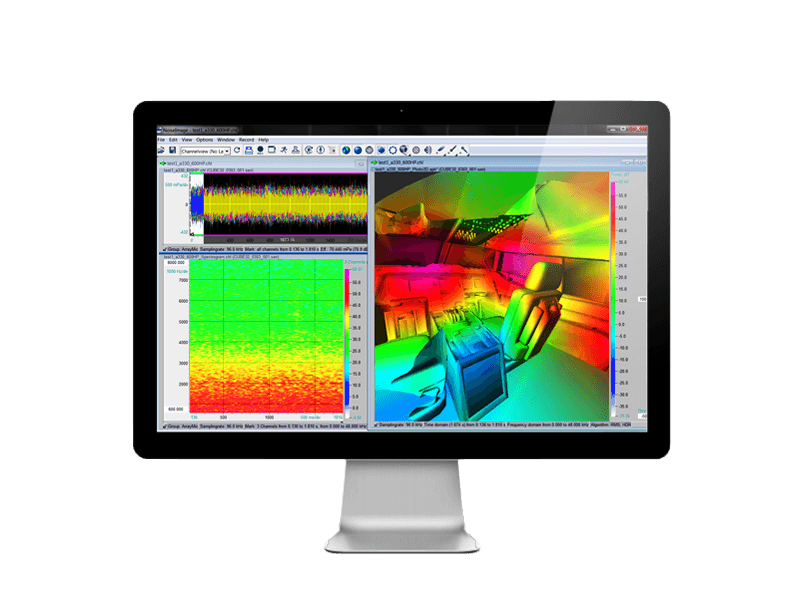

The software is amazingly intuitive, with images popping up within seconds of acquisition, and in-built frequency analysis allows you to home in on hot-spots of sound radiation.

The system can also record ‘acoustic movies’, so you can watch how the sound changes as the source moves. This is very useful, for example, for vehicle pass-by measurements or for sources that change over time. You can even visualise the noise radiated from the blade tips of a wind turbine as it rotates!
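One way to picture an acoustic movie is as a sequence of acoustic maps, each computed from a short time window. The sketch below assumes the beamformed time signal of every focus point (‘acoustic pixel’) is already available as a NumPy array; it splits these signals into consecutive frames and computes one RMS map per frame. The function name, array layout and frame length are illustrative assumptions, not the Acoustic Camera software's interface.

```python
import numpy as np


def acoustic_movie(beamformed: np.ndarray, fs: float, frame_s: float) -> np.ndarray:
    """Return one RMS map per time frame.

    beamformed: array of shape (n_pixels, n_samples), one beamformed
                time signal per focus point, sampled at fs (Hz).
    frame_s:    frame length in seconds (hypothetical parameter).
    Result has shape (n_frames, n_pixels); a trailing partial frame is dropped.
    """
    frame_len = int(round(frame_s * fs))
    if frame_len < 1:
        raise ValueError("frame too short for this sample rate")
    n_pixels, n_samples = beamformed.shape
    n_frames = n_samples // frame_len
    trimmed = beamformed[:, : n_frames * frame_len]
    # Split each pixel's signal into consecutive, non-overlapping frames
    frames = trimmed.reshape(n_pixels, n_frames, frame_len)
    rms = np.sqrt(np.mean(frames ** 2, axis=2))  # shape (n_pixels, n_frames)
    return rms.T
```

Shorter frames follow a moving source more closely but average over fewer samples, so each map is noisier; overlapping frames would be a common refinement.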

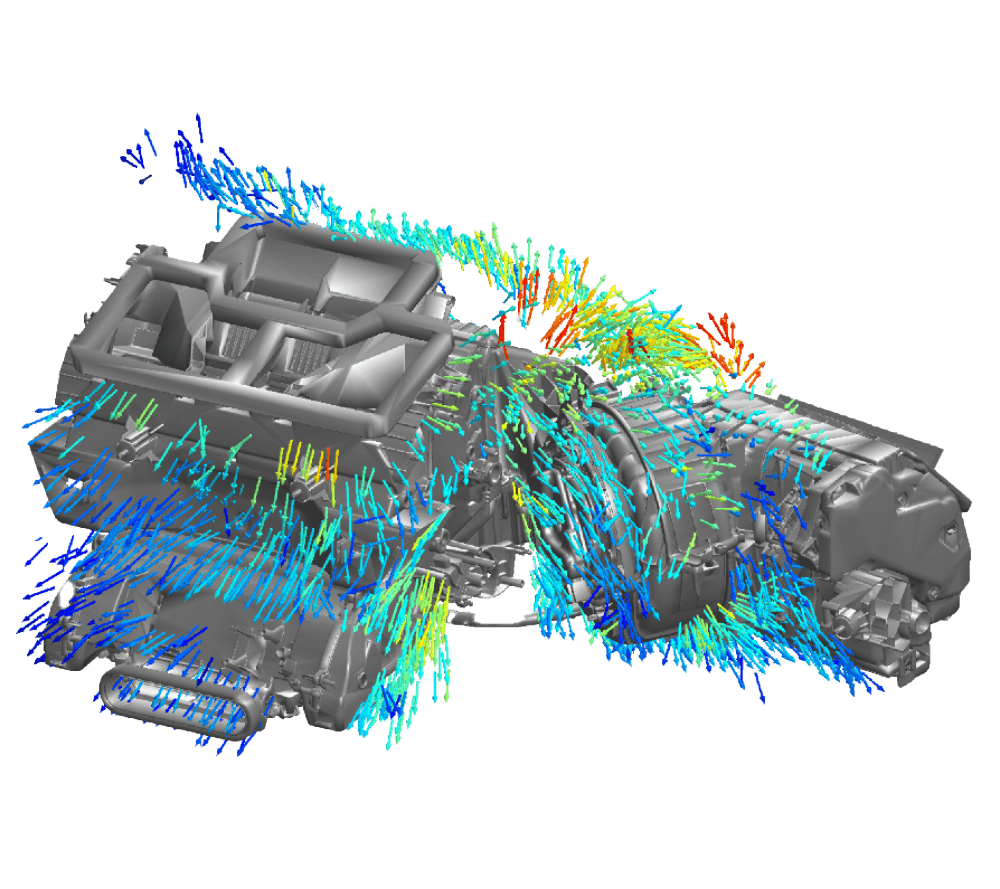

The software also works in 3-D with a special array, so you can see the radiation of sound from complex surfaces. This is a key application inside car cabins, locating the source of squeaks and rattles within seconds.

Extensions include order analysis for rotating machines, and you can also play back the noise from individual ‘acoustic pixels’ to understand how much each source contributes.

Delay-and-Sum Beamforming in the Time Domain (TDBF)

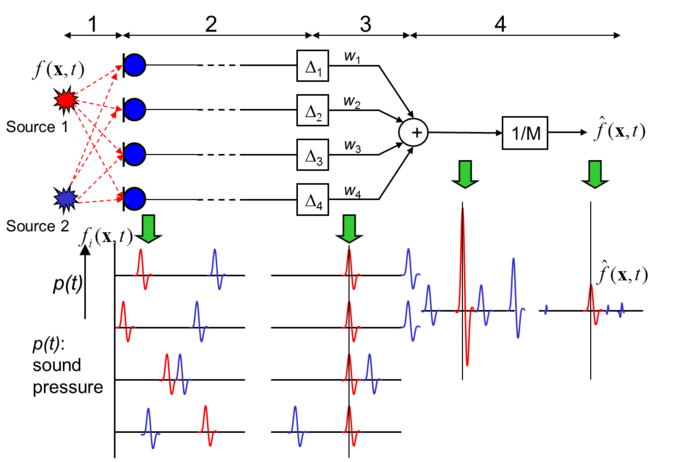

The principle of the delay-and-sum beamformer can be explained by breaking the signal processing down into four main steps. The block diagram in Figure 1 illustrates the case of two point sources situated in front of the microphone array.

Fig. 1: Block diagram of delay-and-sum beamforming

1. The sound of each source travels to every microphone along different paths.

2. The signals captured by the microphones have similar waveforms but show different delays and phases, both of which are proportional to the distances travelled. The delays can be determined from the speed of sound and the distances between each microphone and the sound source. In Figure 1, the beamformer is focused on the point where source 1 is situated.

3. Each microphone signal is shifted by the run-time difference that corresponds to the chosen focus point. As a result, the signal components of source 1 (red impulses) are in phase across all channels, whereas the components originating from source 2 (blue impulses) are out of phase.

4. The signals of all channels are summed, and the sum signal is normalised by the number of microphone channels. The result of this process, $f_{BF}(x,t)$, is shown in Figure 1. In the sum signal, the component of source 1 (red) is as strong as the original amplitude of source 1, whereas the components originating from source 2 (blue) are strongly attenuated and negligible by comparison. The RMS or maximum value of the time signal $f_{BF}(x,t)$ is then calculated for each focus point $x$ and visualised in the acoustic map.
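The four steps can be condensed into $f_{BF}(x,t) = \frac{1}{M}\sum_{i=1}^{M} f_i(t + \Delta_i)$, where $f_i$ is the signal of microphone $i$, $M$ the number of microphones and $\Delta_i = r_i/c$ the run time from the focus point $x$ to microphone $i$ at distance $r_i$. The Python sketch below implements this for a grid of focus points under simplifying assumptions: a constant speed of sound of 343 m/s, delays rounded to whole samples (no interpolation), equal channel weights and no compensation for geometric spreading. All function names are hypothetical and do not describe gfai's implementation.

```python
import numpy as np

SPEED_OF_SOUND = 343.0  # m/s; assumed constant (air at roughly 20 °C)


def delay_and_sum(signals, mic_pos, focus, fs, c=SPEED_OF_SOUND):
    """Time-domain delay-and-sum output f_BF(x, t) for one focus point x.

    signals: (M, N) array of microphone time signals sampled at fs (Hz)
    mic_pos: (M, 3) microphone positions in metres
    focus:   (3,) focus point x in metres
    """
    # Step 2: run time from the focus point to each microphone, in whole samples
    dist = np.linalg.norm(mic_pos - focus, axis=1)
    shifts = np.round(dist / c * fs).astype(int)
    n_valid = signals.shape[1] - shifts.max()
    if n_valid <= 0:
        raise ValueError("recording too short for the required delays")
    # Step 3: advance each channel by its delay so sound emitted at x lines up
    aligned = np.stack([sig[s:s + n_valid] for sig, s in zip(signals, shifts)])
    # Step 4: sum all channels and normalise by the number of microphones
    return aligned.sum(axis=0) / signals.shape[0]


def acoustic_map(signals, mic_pos, grid, fs, c=SPEED_OF_SOUND):
    """RMS of the beamformed signal for each focus point in grid (shape (P, 3))."""
    return np.array([
        np.sqrt(np.mean(delay_and_sum(signals, mic_pos, x, fs, c) ** 2))
        for x in grid
    ])


def simulate_point_source(source, mic_pos, fs, n_samples, seed=0, c=SPEED_OF_SOUND):
    """Hypothetical test signal: white noise from one point, delayed per microphone.

    Geometric spreading (1/r) is ignored to keep the example short.
    """
    s = np.random.default_rng(seed).standard_normal(n_samples)
    delays = np.round(np.linalg.norm(mic_pos - source, axis=1) / c * fs).astype(int)
    return np.stack([np.concatenate([np.zeros(d), s[:n_samples - d]]) for d in delays])
```

For example, with a hypothetical line of 16 microphones and a row of candidate focus points 1 m in front of it, `acoustic_map` returns one RMS value per focus point, and the largest value should appear at the position passed to `simulate_point_source`, because only there do all channels line up in phase.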

The Acoustic Camera brochure

Array Ring 32-35 AC pro datasheet

Array Ring 48-75 AC pro datasheet

Array Ring 72-120 AC pro datasheet

Array Sphere 48-35 AC pro datasheet

Array Sphere 80-60 AC pro datasheet

Array Sphere 120-60 AC pro datasheet

Array Star 48 AC pro datasheet

FlexStar Array 48 – 120 datasheet

Array Paddle 2×24 AC pro datasheet
